TVQA+ Dataset - Download and Description

1. Annotations (QA pairs, bounding boxes, etc.)

Download link: tvqa_plus_annotations.tar.gz [6.4MB], tvqa_plus_annotations_preproc_with_test.tar.gz [7.0MB]

md5sum: 2b834dcc993129b1ec98d76f5c2c9f83, b2683f43c20d567f5fb1fa17887affcb

tvqa_

| File | #QAs | Usage |
|---|---|---|
| tvqa_ | 23,545 | Model training |
| tvqa_ | 3,017 | Hyperparameter tuning |

tvqa_

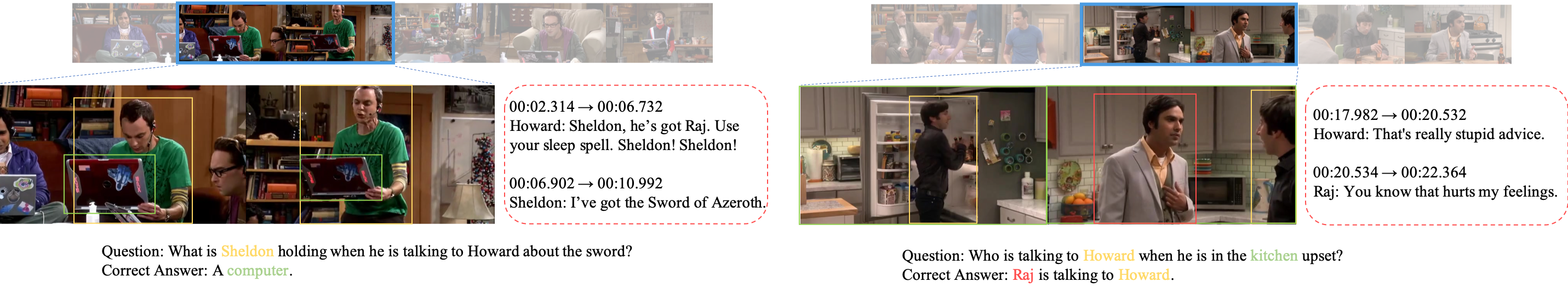

TVQA+ differs from the TVQA dataset in three ways: 1) the questions in TVQA+ are a subset of those in TVQA (The Big Bang Theory only); 2) TVQA+ has frame-level bounding box annotations for visual concept words in questions and correct answers; 3) TVQA+ has better timestamp annotations. Please refer to our paper for more details.

Each JSON file contains a list of dicts. Each dict has the following entries:

| Key | Type | Description |
|---|---|---|
| qid | int | Question ID; identical to the ID used in the TVQA dataset. |
| q | str | Question. |
| a0, ..., a4 | str | Multiple-choice answers. |
| answer_idx | str | Index of the correct answer. |
| ts | list | Timestamp annotation, e.g. [0, 5.4] denotes a localized span that starts at 0 seconds and ends at 5.4 seconds. Note that these are refined timestamps, which differ from those in the TVQA dataset. |
| vid_name | str | Name of the video clip that accompanies the question. The videos are named following the format '{show_ |
| bbox | dict | A set of bounding boxes associated with the annotated frames. The keys are frame numbers for frames extracted at 3 FPS. |

A sample of the QA is shown below:

    {
      "answer_idx": "1",
      "qid": 134094,
      "ts": [5.99, 11.98],
      "a1": "Howard is talking to Raj and Leonard",
      "a0": "Howard is talking to Bernadette",
      "a3": "Howard is talking to Leonard and Penny",
      "a2": "Howard is talking to Sheldon , and Raj",
      "q": "Who is Howard talking to when he is in the lab room ?",
      "vid_name": "s05e02_seg02_clip_00",
      "a4": "Howard is talking to Penny and Bernadette",
      "bbox": {
        "14": [
          {
            "img_id": 14,
            "top": 153,
            "label": "Howard",
            "width": 180,
            "height": 207,
            "left": 339
          },
          {
            "img_id": 14,
            "top": 6,
            "label": "lab",
            "width": 637,
            "height": 354,
            "left": 3
          },
          ...
        ],
        "20": [ ... ],
        "26": [ ... ],
        "32": [ ... ],
        "38": [ ... ]
      }
    }
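To illustrate how an entry with the keys described above might be consumed, here is a minimal sketch in Python. The helper names (`load_annotations`, `correct_answer`, `boxes_xyxy`) are hypothetical and not part of the release, and the embedded sample is a trimmed copy of the QA above, keeping only the two boxes shown for frame 14. The sketch reads `left`/`top` as the top-left corner of each box, which is an assumption based on the key names.

```python
import json


def load_annotations(path):
    """Load one annotation JSON file, which holds a list of QA dicts."""
    with open(path, encoding="utf-8") as f:
        return json.load(f)


def correct_answer(entry):
    """Return the text of the correct answer (answer_idx is stored as a string)."""
    return entry["a" + str(entry["answer_idx"])]


def boxes_xyxy(entry):
    """Map each annotated frame number to a list of (label, x1, y1, x2, y2).

    Assumption: (left, top) is the top-left corner of the box in pixels.
    """
    result = {}
    for frame_no, boxes in entry["bbox"].items():
        result[int(frame_no)] = [
            (b["label"], b["left"], b["top"],
             b["left"] + b["width"], b["top"] + b["height"])
            for b in boxes
        ]
    return result


# Trimmed copy of the sample QA above (only the two boxes shown for frame 14).
sample = {
    "answer_idx": "1",
    "qid": 134094,
    "ts": [5.99, 11.98],
    "a0": "Howard is talking to Bernadette",
    "a1": "Howard is talking to Raj and Leonard",
    "a2": "Howard is talking to Sheldon , and Raj",
    "a3": "Howard is talking to Leonard and Penny",
    "a4": "Howard is talking to Penny and Bernadette",
    "q": "Who is Howard talking to when he is in the lab room ?",
    "vid_name": "s05e02_seg02_clip_00",
    "bbox": {
        "14": [
            {"img_id": 14, "top": 153, "label": "Howard",
             "width": 180, "height": 207, "left": 339},
            {"img_id": 14, "top": 6, "label": "lab",
             "width": 637, "height": 354, "left": 3},
        ]
    },
}

print(correct_answer(sample))   # Howard is talking to Raj and Leonard
print(boxes_xyxy(sample)[14])   # [('Howard', 339, 153, 519, 360), ('lab', 3, 6, 640, 360)]
```

Converting to corner coordinates is a convenience for drawing or IoU computation; the stored `left`/`top`/`width`/`height` values are left untouched.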

2. Subtitles

Download link: tvqa_plus_subtitles.tar.gz [5MB]

md5sum: b2682dafda87de104941e992219fb639

tvqa_

3. Video features

3.1 ImageNet feature, download link: tvqa_

tvqa_ file contains an HDF5 file.

The 2048D features are extracted using ImageNet pretrained ResNet-101 model, at pool5 layer.

For each clip, we use at most 300 frames. If a clip has more than 300 frames, downsampling is applied:

downsample_
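The exact downsampling rule is truncated above, so the following is only a sketch of one possible scheme under stated assumptions, not the authors' code: it keeps every `stride`-th frame, where `stride` is the smallest integer that brings the count to at most 300. The function name `downsample` and the constant `MAX_FRAMES` are illustrative.

```python
import math

MAX_FRAMES = 300  # per-clip limit stated above


def downsample(frames, max_frames=MAX_FRAMES):
    """Keep at most `max_frames` items by taking every `stride`-th one.

    Assumption: a fixed integer stride is used; the released features
    may have been produced with a different rule.
    """
    if len(frames) <= max_frames:
        return list(frames)
    stride = math.ceil(len(frames) / max_frames)
    return list(frames[::stride])


# A clip with 900 frames is reduced with stride 3.
kept = downsample(list(range(900)))
print(len(kept), kept[:4])  # 300 [0, 3, 6, 9]
```

With an integer stride the result can be noticeably shorter than 300 (for example, 301 frames become 151), which is the trade-off for keeping the frame spacing uniform.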

To download the files stored in Google Drive, we recommend using a command-line tool such as gdrive.

3.2 Visual concepts feature, download link:

det_

det_ file contains a Python dict with 'vid_name' as keys. Each value is a list of sentences, and each sentence contains the detected object and attribute labels of a single frame, produced by a modified Faster R-CNN trained on Visual Genome. Note that this feature is downsampled in the same way as the ImageNet feature.

3.3 Regional visual feature: please follow the instructions here to do the extraction. Currently, we do not plan to release it, due to its size.

4. Video frames

Download link: tvqa_video_frames_fps3_hq.tar.gz [43GB], please fill out this form first

The video frames are extracted at 3 frames per second (FPS); we show a sample of them below. To obtain the frames, please fill out the form first. You will be required to provide information about yourself and your advisor, and to sign our agreement. If your form is valid, the download link for the video frames will be sent to you in about a week. Please do not share the video frames with others.